Before launching expensive marketing campaigns, marketers often run tests and then plan their budgets around the results. Experimenting with new media sources helps to increase reach and other metrics. We decided to check how often such test campaigns are effective and what can be achieved with them.

The results are shared by Shani Rosenfeld, Content Manager at AppsFlyer.

For the analysis, we selected apps based on their number of non-organic installs over a time span of at least 18 months, so that we could follow the dynamics of growth and assess the success of test campaigns.

Here is what we found out:

- 43% of the test campaigns were successful. Marketers need only a couple of winning results to direct further growth.

- Success in test campaigns came from using several traffic sources, over 50% of which generated positive results in over 40% of test cases.

- Almost everything was tested: on average, about 30% of all marketing campaigns for an app were tests.

Always test!

In digital mobile marketing, being conservative is one of the gravest sins a marketer can commit. You should regularly look for ways to increase reach, decrease costs, and increase revenue. The answers are usually found by testing. Running tests should be an integral part of a modern marketer’s routine.

Also, if your advertising partners know that you are running tests, they will try even harder to improve the results. It does not matter whether you test continuously, whether this is one stage of a larger test, or whether you are simply comparing results with other sources. They care about good results too.

Client churn is really painful for media partners, to the extent that they are ready to do additional work to reduce it and keep the client.

Tests usually come with low advertising costs, which also improves their cost efficiency. Each new advertising partner that a marketer adds to the budget competes for it with other sources, so the partner tries to offer the most attractive rates. It’s no surprise, then, that many big corporations make testing an integral part of their internal policy. It’s simply a healthy approach to business overall.

How did we know that campaigns were tests?

Tests can only be identified within a single, consistent framework. Therefore, our analysis included:

- Sources of traffic that generated no installs for a specific app for at least 6 months within the 18-month time span.

- Sources of traffic that have data about the app over a time span of at least 3 months.

- Apps that used at least 3 advertising networks and received at least 500 app installs per month from all traffic sources.
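The selection criteria above can be sketched in code. This is a minimal illustration in Python, not the procedure used in the study: the function names, the data layout (18 monthly install counts per traffic source), and the reading of the criteria (a run of consecutive zero-install months, a month "has data" when installs are above zero, and the 500-install floor applied to every month) are all assumptions made for the example.

```python
# Thresholds taken from the article; how they are applied is an assumption.
WINDOW_MONTHS = 18
MIN_QUIET_MONTHS = 6
MIN_DATA_MONTHS = 3
MIN_NETWORKS = 3
MIN_MONTHLY_INSTALLS = 500


def longest_zero_run(monthly_installs):
    """Length of the longest run of consecutive months with zero installs."""
    best = current = 0
    for count in monthly_installs:
        current = current + 1 if count == 0 else 0
        best = max(best, current)
    return best


def is_test_source(monthly_installs):
    """Hypothetical check: does one source look like a test for one app?"""
    months_with_data = sum(1 for count in monthly_installs if count > 0)
    return (
        len(monthly_installs) == WINDOW_MONTHS
        and longest_zero_run(monthly_installs) >= MIN_QUIET_MONTHS
        and months_with_data >= MIN_DATA_MONTHS
    )


def app_qualifies(installs_by_source):
    """Hypothetical check: installs_by_source maps source name -> monthly counts."""
    if not installs_by_source:
        return False
    active_sources = [s for s, months in installs_by_source.items() if sum(months) > 0]
    # Total installs per month across all sources.
    monthly_totals = [sum(month) for month in zip(*installs_by_source.values())]
    return (
        len(active_sources) >= MIN_NETWORKS
        and min(monthly_totals) >= MIN_MONTHLY_INSTALLS
    )


# Made-up data: two steady sources and one that starts after 8 quiet months.
example_app = {
    "net_a": [400] * 18,
    "net_b": [200] * 18,
    "net_c": [0] * 8 + [50] * 10,
}
test_sources = [s for s, m in example_app.items() if is_test_source(m)]
```

With this made-up data, only `net_c` is flagged as a test source, and the app passes the network and install thresholds. A different reading of the criteria (for example, an average of 500 installs per month rather than a floor) would only change the comparison in `app_qualifies`.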

Conclusion #1: 43% of test campaigns were successful

Our data shows that a large share of test campaigns were successful. We looked closely at those that had cost data for at least 3 months, and our success criterion was an increase in traffic to the app for at least 2 months after the start of the test.

- For most apps, 40-60% of test campaigns were successful. For a smaller number of apps, the success rate was between 20% and 40%.

- Almost one in four apps reached a test campaign success rate as high as 61-80%.

In short, a few successful tests are enough to find a winning setup and start scaling campaigns. The more tests you run, the greater the chance of finding the elements that matter most for your app and influence its conversion rate.

Large apps, of course, have more resources. Their marketing specialists are experienced in running test campaigns: they prepare well-fitted creatives, target ads at the best-converting audiences, allocate precisely calculated budgets, and professionally measure the overall effect of their actions. Their problem, however, is getting past legal and financial barriers. For small apps, the situation is exactly the opposite: setup is more difficult, but financial and legal restrictions are easier to deal with.

Conclusion #2: Success depends on the use of many media sources

To show that an app’s success is not limited to particular media sources, we took a closer look at how success is distributed across sources and found the following.

- In general, over 50% of traffic sources are successful in test campaigns in 40-60% of cases.

- The biggest traffic sources are successful in 21% to 60% of cases.

- For really big apps, one in five traffic sources is successful in 61% to 80% of cases.

Conclusion #3: On average, about 30% of advertising campaigns are tests

What percentage of app promotions are test campaigns? Let’s check out the numbers:

| Application size | Number of test campaigns (average) | Share of test campaigns |
| --- | --- | --- |
| Big | 6.0 | 34% |
| Medium | 3.0 | 33% |
| Small | 1.5 | 30% |

Data from 5,025 apps using 1,198 different traffic sources. Test period: from 07.2016 to 12.2017.

- The share of test campaigns is about 30% overall and is almost the same regardless of application size and audience group.

- Big apps run more test campaigns: 2-4 times as many as medium or small apps. This is due to greater access to funds and larger budgets for tests.

Helpful tips

We hope that the conclusions above have convinced you that testing is necessary to get better results from your campaigns. Based on our analysis, we have prepared a few tips that may prove helpful if you decide to run test campaigns for your apps.

Do your groundwork: read, test, ask colleagues, join a community. There are a lot of experienced marketers who will share their knowledge, so do not be afraid to ask questions. In addition, always check the ratings of traffic sources and the research you rely on.

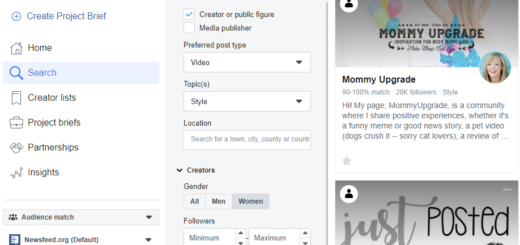

Get key information from media partners: Are their tools self-service or easy to manage? Are their methodologies and reporting transparent? Which of the tools they offer are unique and represent real added value for your business? What ad formats do they offer (make sure there is a preview option, not just template examples)?

Clarify legal issues: We recommend including a withdrawal clause that clearly defines when and on what grounds you can end the test.

Use data to define your goals in advance: Set the minimum test parameters and analyze the results in line with sound statistical practice. For example, run a test in only one country to get results that are statistically significant (i.e., the probability that they are due to chance is low enough). Regardless of whether the goals are short-term (e.g., early stages of the purchase path, such as registration or product search) or long-term (monetization), make sure that the success you report is supported by reliable data. For each traffic provider, specify the time period needed to reach the parameters you have defined as success.
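One common way to check that a difference is unlikely to be due to chance is a two-proportion z-test. The sketch below is an illustration only: the study does not name a specific statistical test, and the function name, the choice of conversion metric (for example, registrations per install), and the sample numbers are all hypothetical.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for the difference between two conversion rates.

    conv_a / n_a: conversions and installs for the source under test.
    conv_b / n_b: conversions and installs for the baseline source.
    Returns (z, p_value). Uses the normal approximation, so it is only
    reasonable when both samples are fairly large.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("cannot test: pooled conversion rate is 0 or 1")
    z = (rate_a - rate_b) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical numbers: 120 of 1,000 installs convert on the tested source,
# 90 of 1,000 on the baseline.
z, p_value = two_proportion_z_test(120, 1000, 90, 1000)
significant = p_value < 0.05  # 0.05 is a conventional, not mandatory, threshold
```

With these made-up numbers the difference clears the conventional 5% threshold. With only a tenth of the traffic at the same rates it would not, which is why it helps to define the minimum test size before the campaign starts.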

Do not be afraid to experiment! Perfect results will not be waiting for you; you have to work your way to optimal targeting. Both the quality and the number of tests count, so do not be discouraged and keep going. Remember that an unsuccessful test is not a failure: it is just another answer to the question “how not to target our campaigns” :).

Bonus

Facebook also lets you track events in your application, so you can direct ads to people who perform certain actions in it (e.g., registration, purchase, or another specific action). You can read more here about how to connect your application with Facebook and about the targeting options inside the application.

Perhaps you will soon enrich your arsenal of targeting criteria with a whole new set of possibilities?