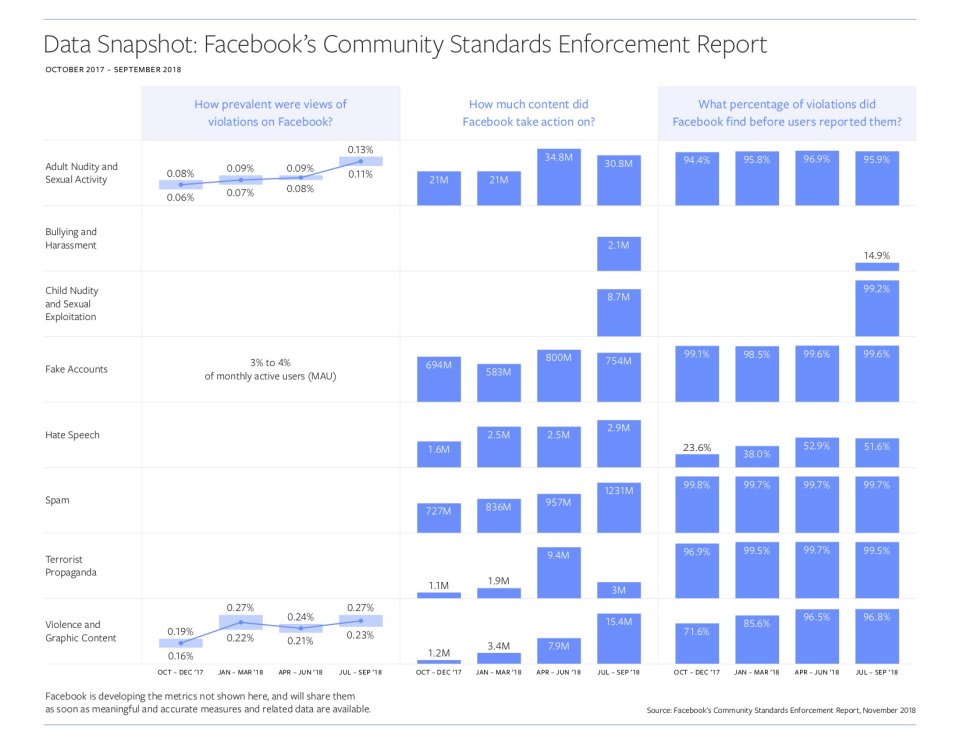

Facebook has released a report tracking its enforcement against offensive content from April through September 2018. It covers the fight against fake accounts, hate speech, spam, violence, terrorist propaganda, nudity, sexual content, bullying, and exploitation. Facebook's bots try to remove offensive content before anyone sees it.

Any community that brings this many users together faces plenty of pitfalls. Facebook realises that people will only be active on its platform if they feel safe. In May, Facebook published a 25-page document outlining its policy on community content safety, and it released a second, updated policy report a month ago.

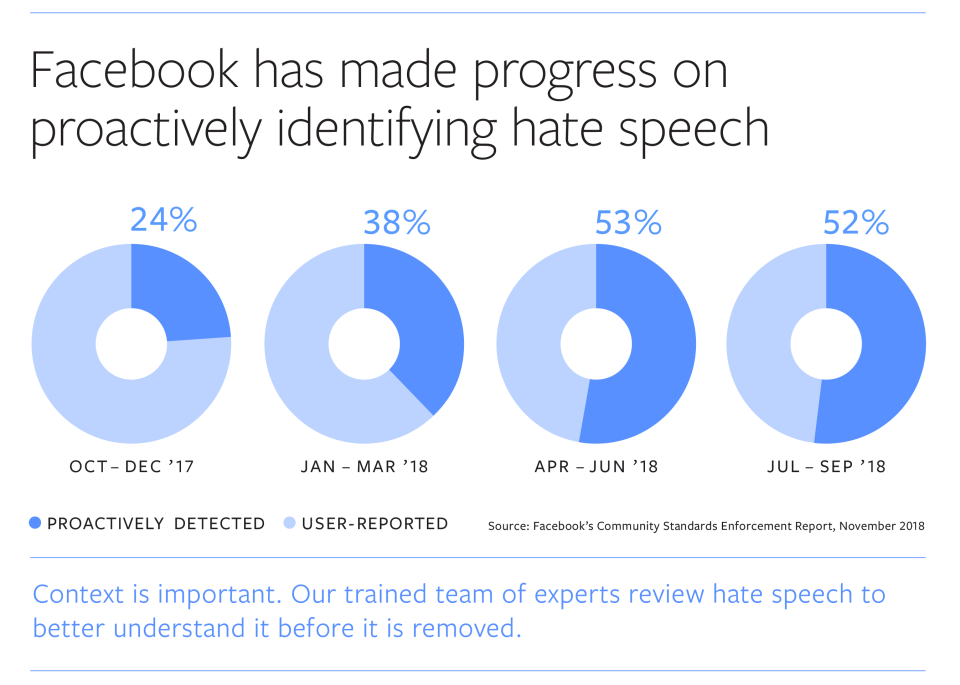

The share of hate speech that Facebook detects before anyone reports it has more than doubled, from 24% to 52%. Facebook is constantly trying to find content that violates its community standards before someone reports it, and most of the posts it now removes for hate speech are found this way.

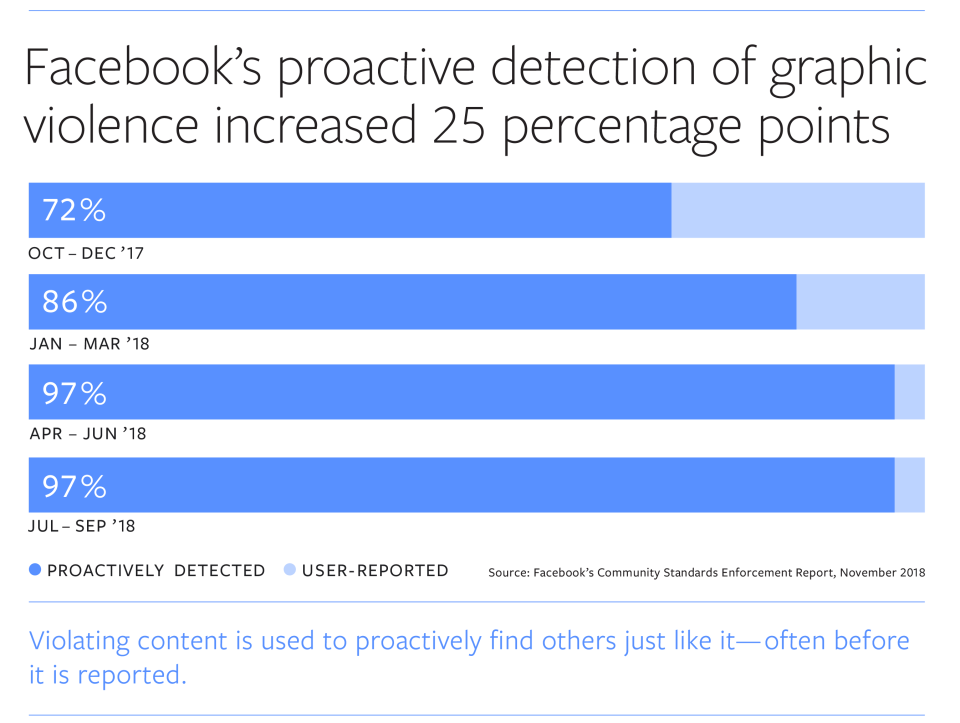

Facebook has improved not only at removing content but also at recognising accounts that violate its standards. In the third quarter of 2018, Facebook took action against 15.4 million pieces of violent and graphic content. This progress is due to the continuous improvement of Facebook’s technologies. In the second and third quarters, Facebook also identified and closed many spam accounts within minutes of their registration.

The next step is to introduce new child protection categories, including:

- Bullying and harassment

- Child nudity and sexual exploitation of children

Facebook is putting considerable effort into these new categories. It also very often removes innocent photos, such as children in the bath, posted by well-meaning parents who don’t realise that someone could misuse the images in another context. In the last quarter, Facebook removed 8.7 million pieces of child-related content and introduced new technology to combat child exploitation.

Mark Zuckerberg also addressed the issue:

Posted by Mark Zuckerberg on Thursday, 15 November 2018

If you want to read the entire report, click on the following link.